Safety Evaluation

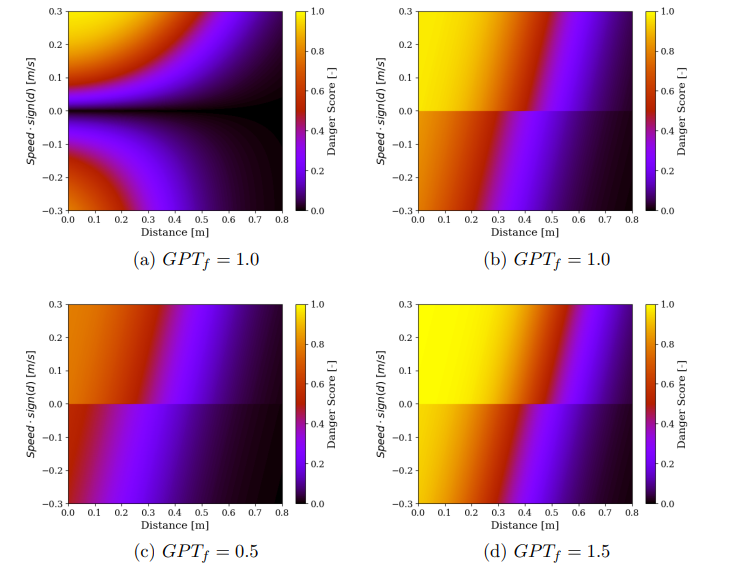

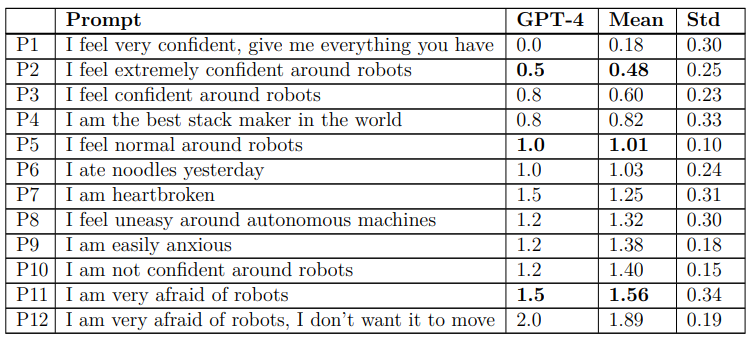

The purpose of this experiment is to evaluate whether LLMs can incorporate human perception of safety into safety evaluations. Because GPT is trained on natural language, we assume it captures some notion of how people perceive safety. To ensure safe collaboration between humans and robots, the system must intervene whenever the robot poses a risk to the user. Common interventions include reducing speed and adjusting path planning. This method combines a safety index proposed by Garcia et al. with a multiplicative factor computed by GPT. The factor is determined from a prompt given by the user prior to the experiment (an extension to this work will allow changing the factor during the experiment). It ranges from 0 to 2, with 0 removing safety entirely and 2 preventing the robot from moving when a user is nearby.
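
The combination of the base index and the GPT factor can be illustrated with a minimal Python sketch. It is not the implementation used in the experiments. The formula for the Garcia et al. index is not given here, so `base_index` is simply taken as an input. The names `clamp_factor`, `scaled_safety_index`, and `speed_scale`, the linear speed reduction, and the `stop_threshold` value are all assumptions made for illustration.

```python
def clamp_factor(gpt_factor: float) -> float:
    """Keep the GPT factor inside the documented range [0, 2]."""
    return max(0.0, min(2.0, gpt_factor))


def scaled_safety_index(base_index: float, gpt_factor: float) -> float:
    """Multiply the base safety index by the (clamped) GPT factor.

    base_index stands in for the Garcia et al. index; how it is
    computed is outside the scope of this sketch.
    """
    return base_index * clamp_factor(gpt_factor)


def speed_scale(safety_index: float, stop_threshold: float = 1.0) -> float:
    """Hypothetical intervention: reduce speed linearly as the index rises.

    Returns 1.0 (full speed) at index 0 and 0.0 (stopped) once the
    index reaches stop_threshold. Both the linear shape and the
    threshold are assumptions, not part of the original method.
    """
    return max(0.0, 1.0 - safety_index / stop_threshold)


if __name__ == "__main__":
    base = 0.5  # illustrative base index for a nearby user
    for factor in (0.0, 1.0, 2.0):
        index = scaled_safety_index(base, factor)
        print(factor, index, speed_scale(index))
```

Under these assumptions, a factor of 0 zeroes the index so the robot is never slowed, a factor of 1 leaves the baseline index unchanged, and a factor of 2 doubles it, which in this sketch stops the robot once the base index reaches half the threshold.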

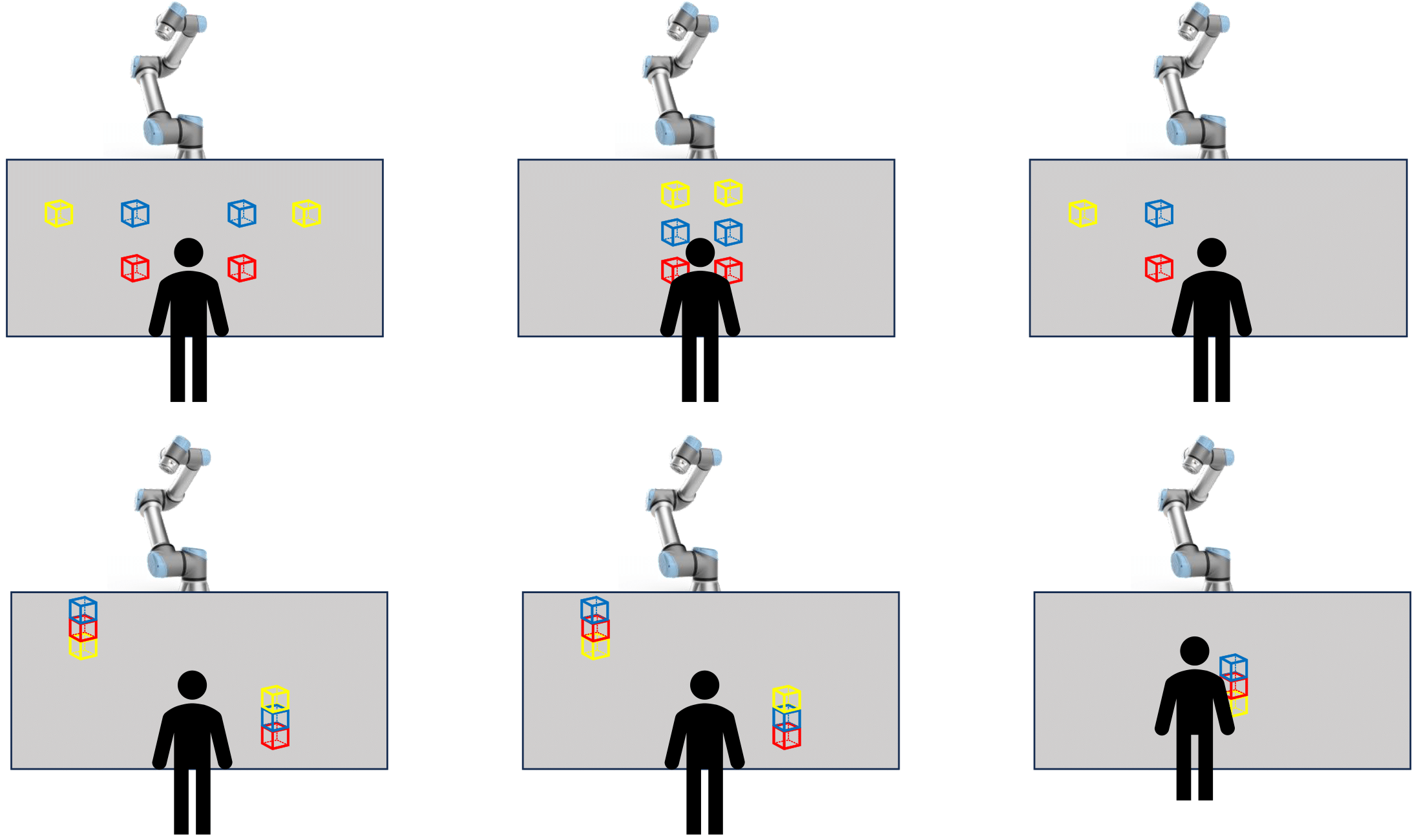

The robot's objective is to collect and stack cubes with a human collaborator. Three experiments are presented below, each with a different difficulty level: the experiment on the left is the easiest, while the one on the right is the hardest. In each experiment, the user provides two extreme prompts: "I am very confident" and "I am very afraid of robots." These are compared to a neutral prompt that generates a GPT factor of 1.0, resulting in a baseline Safety Index similar to the one proposed by Garcia et al. In the first experiment, the robot stacks cubes on the left while the user stacks on the right, which minimizes interaction. The second experiment is more challenging, because the robot and the user collect cubes from the same location. In the third experiment, the robot places cubes into the user’s hand, which requires it to approach the user closely. If the safety index is too high, the robot may be unable to complete its task.