Decision Making

A further objective of the system is to assess whether LLMs are viable for decision-making in HRC as a streamlined coding paradigm. Instead of relying on explicitly coded conditions, this approach specifies behavior through natural-language descriptions and leverages the emergent properties of LLMs. The hypothesis is tested through the decision-making module. When the Danger Score (DS) exceeds a threshold of 0.8, the robot stops, and GPT-4 then decides the next action: wait, withdraw, or change the objective. Adding input to the prompt makes it possible to influence this decision.
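The stop-then-decide flow described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the `RobotState` fields, the function names, and the prompt wording are all assumptions made for the example. Only the 0.8 threshold and the three candidate actions come from the text. The call to GPT-4 itself is left out.

```python
from dataclasses import dataclass

# From the text: the robot stops when the Danger Score exceeds 0.8.
DANGER_THRESHOLD = 0.8
# From the text: the three actions GPT-4 may choose from.
CANDIDATE_ACTIONS = ("wait", "withdraw", "change objective")


@dataclass
class RobotState:
    """Hypothetical container for the state passed to the LLM."""
    danger_score: float      # DS, assumed to lie in [0, 1]
    current_task: str        # e.g. "picking a cube"
    distance_m: float        # end-effector to nearest point on the human body
    layout_description: str  # free-text description of the blocks on the table


def must_stop(state: RobotState) -> bool:
    """Return True when the Danger Score strictly exceeds the threshold."""
    return state.danger_score > DANGER_THRESHOLD


def build_prompt(state: RobotState, extra_instructions: str = "") -> str:
    """Assemble a natural-language prompt (wording is illustrative only)."""
    lines = [
        "The robot has stopped because the danger score is too high.",
        f"Current task: {state.current_task}.",
        f"Distance to the human: {state.distance_m:.2f} m.",
        f"Table layout: {state.layout_description}",
        "Choose exactly one next action: " + ", ".join(CANDIDATE_ACTIONS) + ".",
    ]
    if extra_instructions:
        # Additional text is the lever for influencing the decision.
        lines.append(extra_instructions)
    return "\n".join(lines)
```

In this sketch, influencing the decision amounts to passing a different `extra_instructions` string, for example a natural-language constraint on what to do at short distances, while the code itself stays unchanged.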

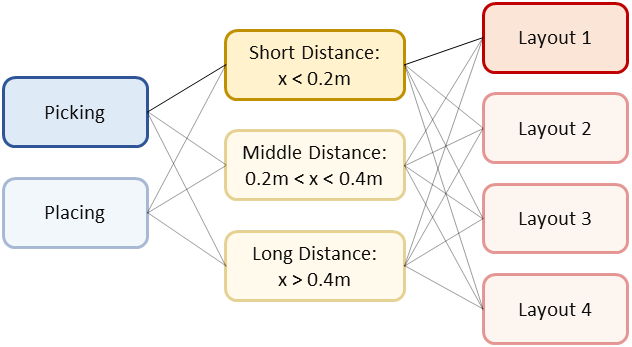

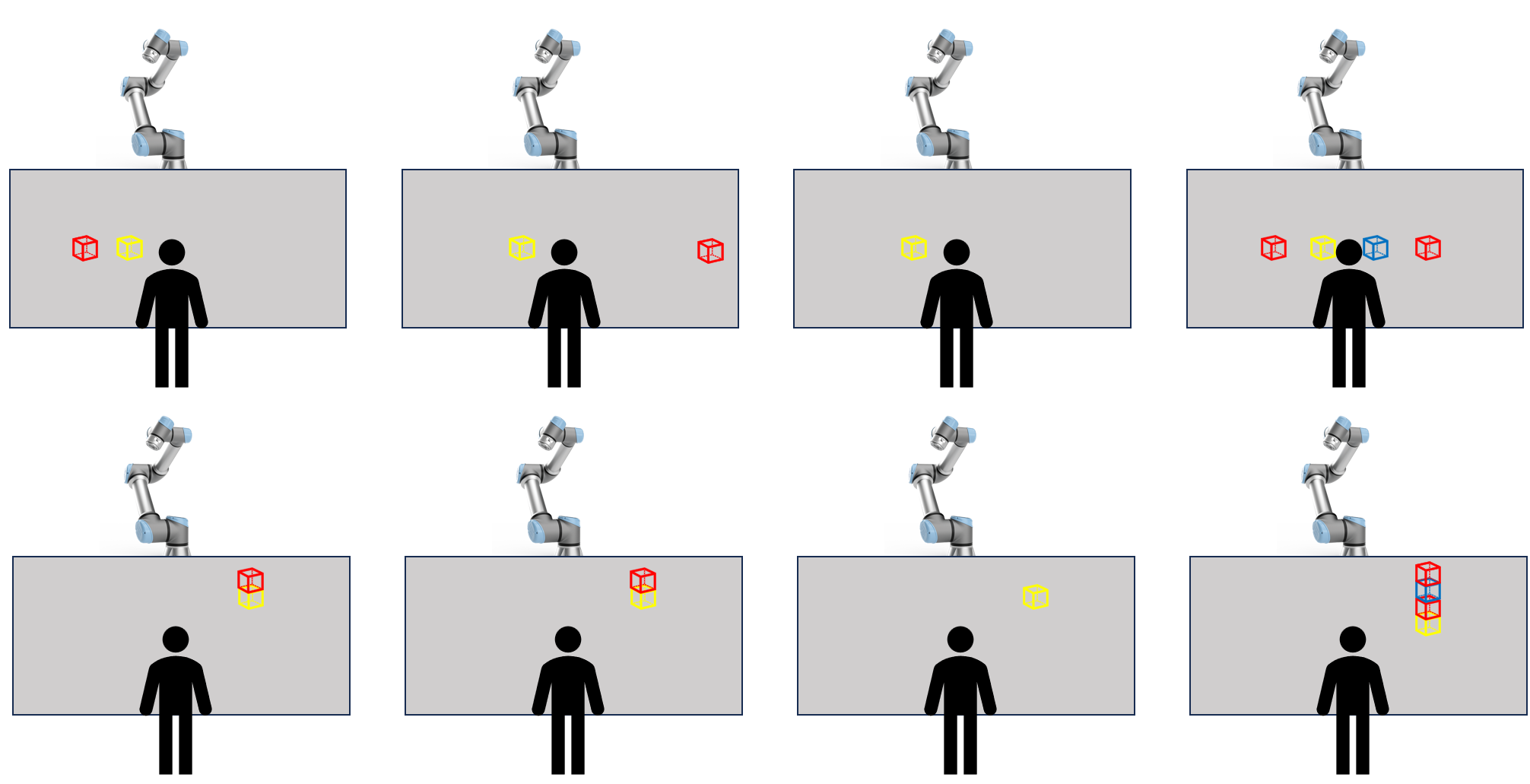

To assess this influence and evaluate its accuracy, the robot is halted in 24 different situations that vary the input parameters used in decision-making. Text constraints imposed on the input variables make accuracy measurable by checking whether the output aligns with these constraints. Every parameter combination is examined, resulting in 24 experiments. The first parameter is the robot's action: either picking or placing a cube. The second is the distance between the robot's end-effector and the nearest point on the human body, categorized as short (dist < 0.2 m), middle (0.2 m < dist < 0.4 m), or long (dist > 0.4 m). The third is the table layout: the layouts below aim to influence GPT-4's decision by adjusting the position and number of other blocks available for pick-up on the table.
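The parameter grid can be illustrated with a short sketch. Two points here are assumptions rather than statements from the text. First, the number of layouts is not given explicitly; four is inferred from 24 / (2 actions × 3 distance classes), and the layout names are placeholders. Second, the text leaves the boundary values 0.2 m and 0.4 m unassigned, and the sketch arbitrarily places them in the middle class.

```python
from itertools import product

ROBOT_TASKS = ("pick", "place")                 # from the text
DISTANCE_CLASSES = ("short", "middle", "long")  # from the text


def classify_distance(dist_m: float) -> str:
    """Map an end-effector-to-human distance (metres) to a class.

    Assumption: exactly 0.2 m and 0.4 m are treated as "middle";
    the text does not assign these boundary values.
    """
    if dist_m < 0.2:
        return "short"
    if dist_m <= 0.4:
        return "middle"
    return "long"


def experiment_grid(layouts):
    """Enumerate every (task, distance class, layout) combination."""
    return list(product(ROBOT_TASKS, DISTANCE_CLASSES, layouts))


# Assumption: four layouts, inferred from 24 / (2 * 3); names are placeholders.
layouts = [f"layout_{i}" for i in range(1, 5)]
grid = experiment_grid(layouts)
print(len(grid))  # 2 * 3 * 4 combinations
```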

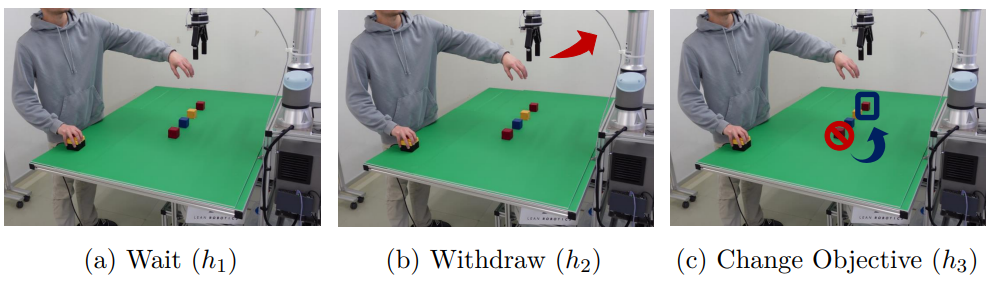

GPT-4 receives the current state information and, based on it, decides which action best suits the situation. The decision made by GPT-4 is displayed as a prompt in the container above. From left to right: wait, withdraw, and change objective.
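Because the model answers in free text, its reply has to be mapped back to one of the three actions before the robot can act on it. The sketch below shows one way this could be done. The `Decision` enum and `parse_decision` function are hypothetical names, and the matching rule (exactly one action name must appear in the reply) is an assumption, not the parsing used in the described system.

```python
from enum import Enum
from typing import Optional


class Decision(Enum):
    WAIT = "wait"
    WITHDRAW = "withdraw"
    CHANGE_OBJECTIVE = "change objective"


def parse_decision(reply: str) -> Optional[Decision]:
    """Return the single action named in the reply, or None if ambiguous."""
    # Normalize case and whitespace, and accept "change the objective".
    text = " ".join(reply.lower().split())
    text = text.replace("change the objective", "change objective")
    matches = [d for d in Decision if d.value in text]
    # Reject replies that name no action or more than one action.
    return matches[0] if len(matches) == 1 else None
```

Returning `None` for ambiguous replies leaves it to the caller to choose a fallback, such as keeping the robot stopped.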